At the end of Chapter 5, we showed that \[

P(\text{10th spin is red} \mid \text{first 9 spins are red} ) = P(\text{10th spin is red}).

\] In other words, the fact that the first 9 spins were red gives no information about whether or not the 10th spin will be red. When the result of one event gives no information about the result of another, the two events are said to be independent. In this chapter, we define independence and explore its consequences.

Independence and its Properties

We formalize the above ideas in the following definition.

Definition 6.1 (Independence of two events) Two events \(A\) and \(B\) with positive probability are independent if either \[

P(A \mid B) = P(A)

\] or \[

P(B \mid A) = P(B).

\tag{6.1}\]

These two conditions are equivalent when \(P(A) > 0\) and \(P(B) > 0\). If they were not, the definition would be ambiguous.

To see why, note that the multiplication rule (Corollary 5.1) says \[P(A \cap B) = P(A)P(B \mid A) = P(B)P(A \mid B).\]

Now, if we assume the first condition, \(P(A \mid B) = P(A)\), then the second equality above simplifies to \[

P(A) P(B \mid A) = P(B) P(A).

\] Canceling \(P(A)\) from both sides (which is legal because we assumed \(P(A) > 0\)), we obtain \(P(B \mid A) = P(B)\), which is the second condition.

The proof that the second condition implies the first is similar.

While independence may be intuitively clear in simple cases like successive spins of a roulette wheel, other situations require us to check independence using Definition 6.1.

Example 6.1 (Betting on red and even) Suppose you make two bets, one on red and another on even, on a single spin of the roulette wheel. Are the two bets independent?

On a roulette wheel (Figure 1.5), there are \(18\) red numbers and \(18\) even numbers (0 and 00 do not count as even), but only \(8\) numbers that are both red and even. Therefore: \[

\begin{align*}

&P(\text{red} \mid \text{even} ) & \\

&= \frac{P(\text{red and even}) }{P(\text{even}) } \\

&= \frac{ 8/38 }{ 18/38 }\\

&= \frac{8}{18}.

\end{align*}

\] But this is not equal to \(P(\text{red}) = \frac{18}{38}\), so red and even are not independent.
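The calculation above can also be checked by brute-force enumeration of the wheel. The following is a minimal Python sketch. It assumes the standard American layout for which numbers are red (the set `RED` is our transcription of that layout, not something defined in the text), and the helper names `is_red`, `is_even`, and `prob` are purely illustrative.

```python
from fractions import Fraction

# Pockets of an American wheel: 1-36 plus "0" and "00" (38 in total).
POCKETS = [str(n) for n in range(1, 37)] + ["0", "00"]

# Assumed layout: the 18 red numbers on a standard American wheel.
RED = {1, 3, 5, 7, 9, 12, 14, 16, 18, 19, 21, 23, 25, 27, 30, 32, 34, 36}

def is_red(pocket):
    return pocket not in ("0", "00") and int(pocket) in RED

def is_even(pocket):
    # 0 and 00 do not count as even for betting purposes.
    return pocket not in ("0", "00") and int(pocket) % 2 == 0

def prob(event):
    """Probability of an event when all 38 pockets are equally likely."""
    return Fraction(sum(1 for p in POCKETS if event(p)), len(POCKETS))

p_red = prob(is_red)
p_even = prob(is_even)
p_red_and_even = prob(lambda p: is_red(p) and is_even(p))
p_red_given_even = p_red_and_even / p_even

# Fractions are printed in lowest terms.
print("P(red | even) =", p_red_given_even)
print("P(red)        =", p_red)
print("independent?  ", p_red_given_even == p_red)
```

Exact fractions are used instead of floating-point numbers so that the comparison between \(P(\text{red} \mid \text{even})\) and \(P(\text{red})\) is not affected by rounding.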

An equivalent characterization of independence comes from the multiplication rule (Corollary 5.1): two events are independent when the probability of both happening is equal to the product of their individual probabilities. It is usually easier to check independence using this characterization.

Proposition 6.1 (Independence of two events) Two events \(A\) and \(B\) with positive probability are independent if and only if \[

P(A \cap B) = P(A)P(B).

\tag{6.2}\]

When either \(A\) or \(B\) has zero probability, then both sides of Equation 6.2 are zero. Because of this, probability zero events are considered to be independent of all other events.

Because the statement is an “if and only if” statement, we must show both directions. For both directions, we will use the multiplication rule (Corollary 5.1), which says \[P(A \cap B) = P(A)P(B \mid A). \tag{6.3}\]

[\(\Rightarrow\)] “Only if” direction:

Suppose that \(A\) and \(B\) are independent, so \(P(B \mid A) = P(B)\). Substituting this into Equation 6.3, we obtain \[ P(A \cap B) = P(A) P(B), \] as we wanted to show.

[\(\Leftarrow\)] “If” direction:

Suppose that \(P(A \cap B) = P(A) P(B)\). Substituting this into Equation 6.3, we obtain \[P(A) P(B) = P(A) P(B \mid A).\] Dividing both sides by \(P(A)\) shows that \(P(B) = P(B \mid A)\), which implies that \(A\) and \(B\) are independent.

We can use Proposition 6.1 to re-analyze Example 6.1.

Example 6.2 (Betting on red and even, revisited) We revisit Example 6.1, where we wanted to know if red and even in roulette are independent. Since

\[

P(\text{red and even}) = \frac{8}{38} \neq \frac{18}{38} \cdot \frac{18}{38} = P(\text{red})P(\text{even}),

\] the two events cannot be independent.

Independence is also an important concept in genetics. Its meaning in genetics derives from its meaning in probability, as we show in the next example. This example also illustrates how Proposition 6.1 can be used to calculate probabilities.

Example 6.3 (Mendel’s Law of Independent Assortment) In Example 1.6, we learned that albinism is caused by a mutation in the OCA2 gene on chromosome 15. Each person has two copies of this gene, which come in one of two versions (called alleles):

- \(A\): the version without the mutation

- \(a\): the mutated version that can cause albinism

A person only exhibits albinism if both copies of the gene are the mutated version (i.e., they have genetic makeup \(aa\)).

Now, suppose we are also interested in the MC1R gene on chromosome 16, which influences whether a person has freckles. Like OCA2, MC1R has two alleles:

- \(B\): the version without the mutation

- \(b\): the mutated version that causes freckles

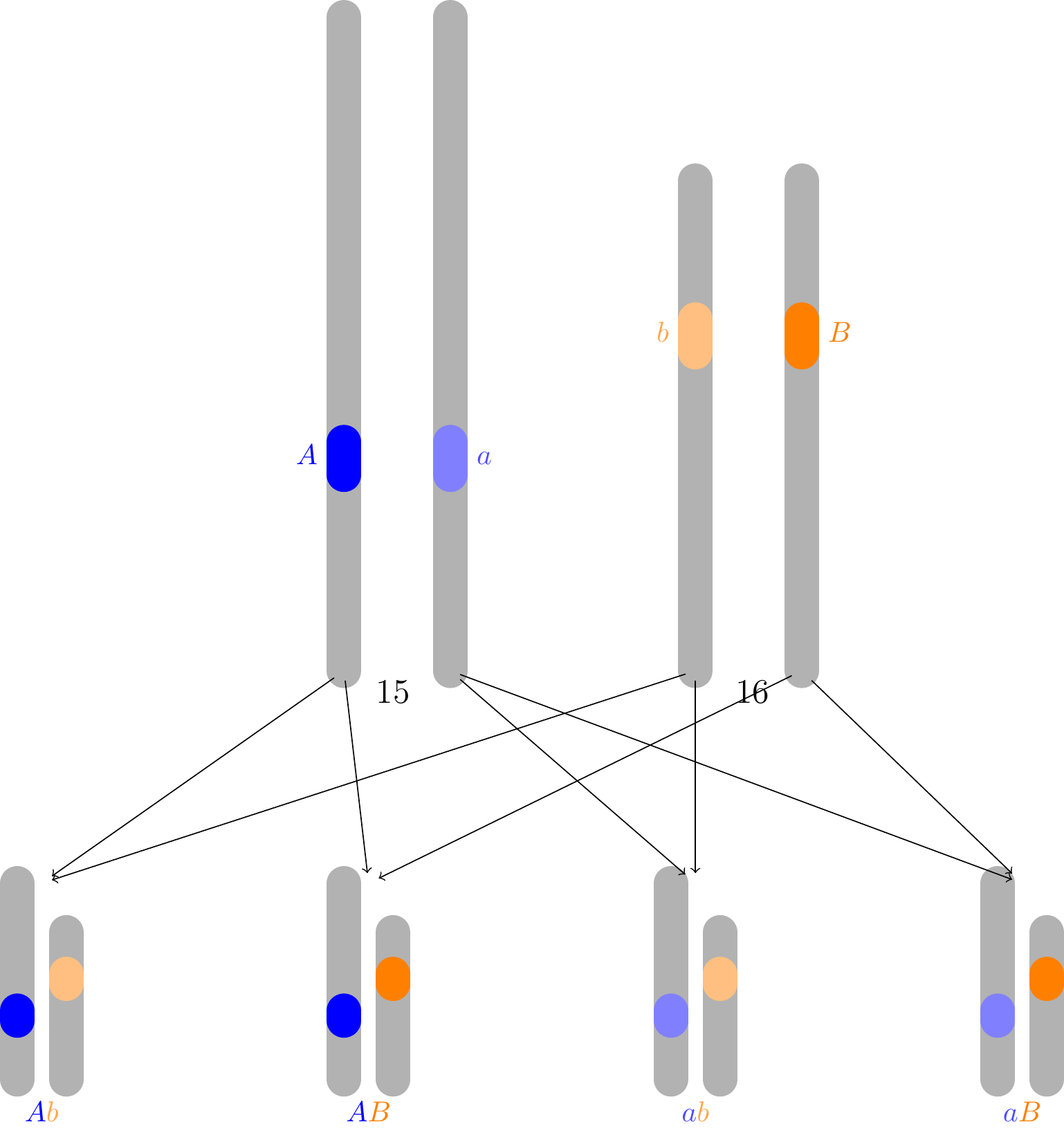

Mendel’s Law of Independent Assortment asserts that different genes are inherited independently. This turns out to be true only because the two genes are located on different chromosomes. Figure 6.1 shows two pairs of chromosomes for an individual who is a carrier of recessive alleles for both genes (i.e., with genetic makeup \(AaBb\)).

During cell division, one chromosome in each pair is randomly and independently selected for each sperm or egg cell. Therefore, the genes will also be chosen independently.

In Example 1.6, we saw that the probability that the child exhibits a given recessive trait (\(aa\) for albinism, or \(bb\) for freckles) is \(1/4\). Because the two genes are inherited independently, we can apply Proposition 6.1 to obtain the following joint probabilities:

\[

\begin{align}

P(\text{child is not albino and not freckled}) &= P(\text{child is not albino}) P(\text{child is not freckled})\\

&= \frac{3}{4} \cdot \frac{3}{4} = \frac{9}{16} \\

P(\text{child is albino and not freckled}) &= P(\text{child is albino}) P(\text{child is not freckled})\\

&= \frac{1}{4} \cdot \frac{3}{4} = \frac{3}{16} \\

P(\text{child is not albino and freckled}) &= P(\text{child is not albino}) P(\text{child is freckled})\\

&= \frac{3}{4} \cdot \frac{1}{4} = \frac{3}{16} \\

P(\text{child is albino and freckled}) &= P(\text{child is albino}) P(\text{child is freckled})\\

&= \frac{1}{4} \cdot \frac{1}{4} = \frac{1}{16}

\end{align}

\]
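These four probabilities can be reproduced by enumerating a two-gene Punnett square. The sketch below is illustrative only: it assumes that both parents have genetic makeup \(AaBb\) and that each parent passes on one allele of each gene, chosen uniformly at random and independently across genes and parents. The variable names are ours.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Assumption: both parents are AaBb, so each contributes A or a, and B or b,
# with all combinations equally likely.
oca2_alleles = ["A", "a"]
mc1r_alleles = ["B", "b"]

counts = Counter()
# Enumerate the 16 equally likely (mother's alleles, father's alleles) combinations.
for m_a, m_b, f_a, f_b in product(oca2_alleles, mc1r_alleles,
                                  oca2_alleles, mc1r_alleles):
    albino = (m_a == "a" and f_a == "a")      # genetic makeup aa
    freckled = (m_b == "b" and f_b == "b")    # genetic makeup bb
    counts[(albino, freckled)] += 1

total = sum(counts.values())
probs = {key: Fraction(n, total) for key, n in counts.items()}

for (albino, freckled), p in sorted(probs.items()):
    print(f"albino={albino}, freckled={freckled}: {p}")
```

Note that the enumeration builds in independent assortment by treating all 16 combinations as equally likely, so it confirms the arithmetic rather than the biological law itself.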

In Mendel’s experiments, the subjects were pea plants, not humans. He studied two genes in pea plants, one that determines the seed color and another that determines the seed shape. (Here, “green” and “wrinkled” are the recessive alleles.) He recorded the following data on \(556\) offspring.

| Seed color | Seed shape | Count |
|---|---|---|
| yellow | round | \(315\) |
| green | round | \(108\) |
| yellow | wrinkled | \(101\) |
| green | wrinkled | \(32\) |

Notice how close these counts are to the 9:3:3:1 ratio predicted by the Law of Independent Assortment!
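To make "how close" concrete, we can compare Mendel's counts with the counts that a 9:3:3:1 ratio would predict for \(556\) offspring. This is a small Python sketch. The dictionary names are ours, and no formal goodness-of-fit test is attempted.

```python
# Mendel's observed counts, keyed by (seed color, seed shape).
observed = {
    ("yellow", "round"): 315,
    ("green", "round"): 108,
    ("yellow", "wrinkled"): 101,
    ("green", "wrinkled"): 32,
}

# Parts out of 16 predicted by the Law of Independent Assortment.
ratio = {
    ("yellow", "round"): 9,
    ("green", "round"): 3,
    ("yellow", "wrinkled"): 3,
    ("green", "wrinkled"): 1,
}

n = sum(observed.values())
expected = {key: n * parts / 16 for key, parts in ratio.items()}

for key in observed:
    print(key, "observed:", observed[key], "expected:", expected[key])
```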

In many real-world problems, we do not just work with two independent events, but rather a collection of many independent events. As Definition 6.2 explains, a collection of events is independent when knowing that a sub-collection of them occurred does not change the probability of any other event in the collection.

Definition 6.2 (Independence of many events) A (possibly infinite) collection of positive probability events \(A_1, A_2, \dots\) is independent if the probability of any one of them happening does not change, given that some other finite sub-collection of them happened.

More concretely, for any two events,

\[

\begin{aligned}

P(A_i \mid A_j) &= P(A_i), \qquad i \neq j,

\end{aligned}

\tag{6.4}\] and for any three events, \[

\begin{aligned}

P(A_i \mid A_j, A_k) &= P(A_i), \qquad \text{$i$, $j$, $k$ distinct},

\end{aligned}

\tag{6.5}\] and for any four events,

\[

\begin{aligned}

P(A_i \mid A_j, A_k, A_{\ell}) &= P(A_i), \qquad \text{$i$, $j$, $k$, $\ell$ distinct},

\end{aligned}

\tag{6.6}\]

and so on.

There is a similar characterization of independence in terms of multiplication, analogous to Proposition 6.1, which is often more useful.

Theorem 6.1 (Independence of many events) A (possibly infinite) collection of positive probability events \(A_1, A_2, \dots\) is independent if and only if the probability of any finite sub-collection equals the product of the probabilities of the individual events in that sub-collection. That is, for any two events,

\[

\begin{aligned}

P(A_i \cap A_j) &= P(A_i)P(A_j), \qquad i \neq j,

\end{aligned}

\tag{6.7}\]

and for any three events,

\[

\begin{aligned}

P(A_i \cap A_j \cap A_k) &= P(A_i)P(A_j)P(A_k), \qquad \text{$i$, $j$, $k$ distinct},

\end{aligned}

\tag{6.8}\]

and for any four events,

\[

\begin{aligned}

P(A_i \cap A_j \cap A_k \cap A_\ell) &= P(A_i)P(A_j)P(A_k) P(A_\ell), \qquad \text{$i$, $j$, $k$, $\ell$ distinct},

\end{aligned}

\tag{6.9}\]

and so on.

Because the statement is an “if and only if” statement, we must show both directions. For both directions, we will use the general multiplication rule (Theorem 5.1), which says \[P(A_1 \cap A_2 \cap \dots \cap A_n) = P(A_1) P(A_2 \mid A_1) P(A_3 \mid A_1, A_2) \dots P(A_n \mid A_1, \dots, A_{n-1}). \tag{6.10}\]

[\(\Rightarrow\)] “Only if” direction:

Suppose that the events are independent, meaning that the conditional probabilities satisfy Equation 6.4, Equation 6.5, and so on. Now, for any sub-collection, we can apply Equation 6.10 to obtain \[

\begin{align*}

P(A_{i_1} \cap A_{i_2} \cap \dots \cap A_{i_n}) &= P(A_{i_1}) P(A_{i_2} \mid A_{i_1}) \dots P(A_{i_n} \mid A_{i_1}, A_{i_2}, \dots, A_{i_{n-1}}) \\

&= P(A_{i_1}) P(A_{i_2}) \dots P(A_{i_n}),

\end{align*}

\] where we used the assumption of independence in the last step. This shows that the probabilities multiply.

[\(\Leftarrow\)] “If” direction:

Suppose that the probabilities multiply, as in Equation 6.7, Equation 6.8, and so on. Then Equation 6.4 holds by Proposition 6.1, and Equation 6.5 holds because \[

\begin{align*}

P(A_i)P(A_j)P(A_k) &= P(A_i \cap A_j \cap A_k) & \text{(assumed factoring)}\\

&= P(A_i) P(A_j \mid A_i)P(A_k \mid A_i, A_j) & \text{(multiplication rule)} \\

&= P(A_i) P(A_j) P(A_k \mid A_i, A_j). & \text{(previous result)}

\end{align*}

\] Dividing both sides by \(P(A_i)P(A_j)\), we get that \(P(A_k \mid A_i, A_j) = P(A_k)\). Now, we can show that Equation 6.6 and so on hold by induction. This proves that the events are independent in the sense of Definition 6.2.

Verifying that many events are collectively independent can be tricky. For example, even if all pairs of events in a collection are independent, the collection may not be independent as a whole. We ask you to construct a counterexample in Exercise 6.5.

Although the properties of independent collections are hard to characterize formally, the important properties are intuitive. For instance, if \(A_1, A_2, \dots\) is an independent collection, then new collections of events like \(E_1 = A_1^c\), \(E_2 = A_2 \cup A_3\), and \(E_3 = A_4 \cap A_5\) will also be independent—provided that no \(A_i\) appears in more than one of the \(E_j\). A general proof of this property is quite technical and beyond the scope of this book, but Exercise 6.6 invites you to check some particular instances of this fact.

Examples

Now, we work through several examples that use independence.

Example 6.4 (Cardano’s problem revisited) How many rolls of a fair die are needed to have a probability of at least \(0.5\) of rolling a six?

Let \(A_i\) be the event that the \(i\)th roll is not a six. Since dice rolls are independent, \(A_1, A_2, \dots\) are independent. Therefore, the probability of rolling a six in \(n\) rolls is

\[

\begin{align*}

P(\text{at least one six}) &= 1 - P(\text{no sixes}) & \text{(complement rule)}\\

&= 1 - P(A_1, \dots, A_n) & \text{(same event)}\\

&= 1 - P(A_1) \times \dots \times P(A_n) & \text{(independence)}\\

&= 1- \underbrace{\frac{5}{6} \times \dots \times \frac{5}{6}}_{n \text{ times}} & \text{(plug in probabilities)}\\

&= 1 - \left(\frac{5}{6}\right)^n

\end{align*}

\]

This agrees with our earlier answer from Example 4.1. As before, we can require this expression to be at least \(0.5\) and solve for \(n\) to obtain \(n \geq \log(0.5) / \log(5/6) \approx 3.8\), so at least \(4\) rolls are needed.
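The same answer can be found numerically by increasing \(n\) until the probability first reaches \(0.5\). The helper name `prob_at_least_one_six` in this short Python sketch is ours.

```python
def prob_at_least_one_six(n):
    """P(at least one six in n independent rolls of a fair die)."""
    return 1 - (5 / 6) ** n

# Increase n until the probability first reaches 0.5.
n = 1
while prob_at_least_one_six(n) < 0.5:
    n += 1

print("smallest n:", n)
print("probability with n - 1 rolls:", prob_at_least_one_six(n - 1))
print("probability with n rolls:    ", prob_at_least_one_six(n))
```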

By assuming independence, we can often obtain more precise answers to questions. In the next example, we re-analyze Example 4.5 with the added assumption of independence.

Example 6.5 (Quality control for medical devices revisited) Suppose, as in Example 4.5, we are manufacturing batches of \(n=50\) medical devices. If each device we make has some failure probability \(p\), how low does \(p\) need to be to ensure that no devices fail with probability at least \(99\%\)?

We will assume that failures are due to one-off mishaps on the assembly line that have nothing to do with each other—that is, the failures of different devices are independent. Let \(A_i\) be the event that the \(i\)th device fails. Then, the events \(A_1, \dots, A_n\) are independent, as are their complements \(A_1^c, \dots, A_n^c\). Therefore:

\[

\begin{align*}

P(\text{no device fails}) &= P(A_1^c, \dots, A_{50}^c) \\

&= P(A_1^c) \times \dots \times P(A_{50}^c) & \text{(independence)}\\

&= (1-p)^{50} & \text{(earlier computation)}

\end{align*}

\]

Now, we want this probability to be at least \(0.99\). Solving for \(p\), we obtain \[ p \leq 1 - (0.99)^{1/50} \approx 0.000201,\] which gives slightly more leeway than the worst-case analysis in Example 4.5, where we required \(p \leq 0.0002\). However, the difference is small, showing that the worst-case analysis was not so far off after all.
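The bound on \(p\) is easy to evaluate numerically. In the Python sketch below, the worst-case threshold \(0.0002\) is simply the value quoted from Example 4.5, and the variable names are ours.

```python
n = 50          # devices per batch
target = 0.99   # required probability that no device fails

# Largest failure probability allowed under independence: (1 - p)^n >= target.
p_independent = 1 - target ** (1 / n)

# Threshold quoted from the worst-case analysis in Example 4.5.
p_worst_case = 0.0002

print("bound under independence:", p_independent)
print("worst-case bound:        ", p_worst_case)
print("check (1 - p)^n:         ", (1 - p_independent) ** n)
```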

Next, we show how independence can be invoked intuitively to simplify calculations.

Example 6.6 (Winning a pass-line bet for a specific point) Suppose that in a round of craps (Example 1.9), the point has been set at five. What is the probability the shooter wins the round? That is, what is the probability that they roll a five again before rolling a seven?

Each time the shooter rolls the dice, the result is either a five, a seven, or something else. If it is something else, then the round continues. Because the rolls are independent, the past rolls should not influence the current roll. Therefore, we can restrict our attention to the roll where the round ends. The probability that the shooter wins is the same as the probability that they roll a five, given that the round ends on the current roll. That is, \[

\begin{align*}

P(\text{shooter wins}) &= P(\text{roll a five} \mid \text{roll a five or a seven}) \\

&= \frac{P(\text{roll a five})}{P(\text{roll a five or seven})} & \text{(definition of conditional probability)}\\

&= \frac{P(\text{roll a five})}{P(\text{roll a five}) + P(\text{roll a seven})} & \text{(Axiom 3) }\\

&= \frac{4/36}{4/36 + 6/36} \\

&= \frac{4}{10}.

\end{align*}

\]

Notice how we appealed to independence to justify restricting attention to the current roll. However, to prove this rigorously, we have to consider all rolls after the come-out roll.

To compute the probability that the shooter wins, we break it down by roll. The shooter could win on the first roll (after the come-out roll), the second roll, and so on. \[

\begin{align}

P(\text{win} \mid \text{point is 5}) &= P\left( \bigcup_{n=1}^\infty \{ \text{win on $n$th roll} \} \, \Bigg| \, \text{point is 5}\right) \\

&= \sum_{n=1}^\infty P(\text{win on $n$th roll} \mid \text{point is 5}).

\end{align}

\] The summation is justified by Axiom 3 (applied to the conditional probability function \(\widetilde P(A) = P(A \mid \text{point is 5})\)), since the events are mutually exclusive.

Now, we evaluate the conditional probability inside the sum. To do this, we will rewrite the event \(\{ \text{point is 5}, \text{win on $n$th roll} \}\) in terms of the dice rolls, which we know are independent. We will define:

- \(E_i\): the event that the \(i\)th roll is \(5\) or \(7\)

- \(F_i\): the event that the \(i\)th roll is \(5\)

for \(i=0, 1, 2, \dots\) (where \(i=0\) denotes the come-out roll). Note that rolls are independent, so \(E_i\) and \(F_i\) are independent of \(E_j\) and \(F_j\) for \(i \neq j\).

\[

\begin{align}

P(&\text{win on $n$th roll} \mid \text{point is 5}) \\

&= \frac{P(\text{point is 5}, \text{win on $n$th roll})}{P(\text{point is 5})} & \text{(def. of conditional probability)} \\

&= \frac{P(F_0, E_1^c, E_2^c, \dots, E_{n-1}^c, F_n)}{P(F_0)} & \text{(rewrite event)} \\

&= \frac{P(F_0) P(E_1^c) P(E_2^c) \dots P(E_{n-1}^c) P(F_n)}{P(F_0)} & \text{(independence of rolls)} \\

&= \Big(1 - \frac{10}{36}\Big)^{n-1} \frac{4}{36}. & (P(E_i) = \frac{10}{36}, P(F_n) = \frac{4}{36})

\end{align}

\]

Substituting this into the summation above, we obtain a geometric series: \[

\begin{align}

P(\text{win}\mid\text{point is 5}) &= \sum_{n=1}^\infty \left(1 - \frac{10}{36}\right)^{n-1} \frac{4}{36} \\

&= \frac{4}{36} \frac{1}{1 - (1 - \frac{10}{36})} \\

&= \frac{4}{10}.

\end{align}

\]
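As a sanity check on the geometric series, we can compare its partial sums with the closed form \(\frac{4}{10}\). The Python sketch below uses exact fractions, and the function name `partial_sum` is ours.

```python
from fractions import Fraction

p_five = Fraction(4, 36)    # P(F_n): a given roll is a five
p_end = Fraction(10, 36)    # P(E_n): a given roll is a five or a seven

def partial_sum(num_terms):
    """Sum of the first num_terms terms of the series for P(win | point is 5)."""
    return sum((1 - p_end) ** (n - 1) * p_five
               for n in range(1, num_terms + 1))

closed_form = p_five / p_end

for k in (1, 5, 20, 100):
    print(k, float(partial_sum(k)))
print("closed form:", float(closed_form))
```

The partial sums increase toward the closed form, with the gap shrinking by a factor of \(1 - \frac{10}{36}\) for each additional term.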

We can repeat the above argument for different come-out rolls to obtain the following table of conditional probabilities.

| Come-out roll | \(P(\text{win} \mid \text{come-out roll})\) |
|---|---|
| 2 | \(0\) |
| 3 | \(0\) |
| 4 | \(\frac{3}{9}\) |
| 5 | \(\frac{4}{10}\) |
| 6 | \(\frac{5}{11}\) |
| 7 | \(1\) |
| 8 | \(\frac{5}{11}\) |
| 9 | \(\frac{4}{10}\) |
| 10 | \(\frac{3}{9}\) |
| 11 | \(1\) |
| 12 | \(0\) |
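The whole table can be generated with the shortcut from Example 6.6: when a point is set, the shooter wins with probability \(P(\text{point}) / (P(\text{point}) + P(\text{seven}))\). The following Python sketch assumes the pass-line rules reflected in the table (a come-out roll of 7 or 11 wins immediately, and 2, 3, or 12 loses immediately). The function name is ours.

```python
from fractions import Fraction
from itertools import product

# Number of ways to roll each total with two fair dice.
ways = {}
for d1, d2 in product(range(1, 7), repeat=2):
    ways[d1 + d2] = ways.get(d1 + d2, 0) + 1

def p_win_given_come_out(total):
    """P(shooter wins | come-out roll equals total)."""
    if total in (7, 11):       # immediate win
        return Fraction(1)
    if total in (2, 3, 12):    # immediate loss
        return Fraction(0)
    # Otherwise `total` is the point: win if it is rolled again before a seven.
    return Fraction(ways[total], ways[total] + ways[7])

for total in range(2, 13):
    print(total, p_win_given_come_out(total))
```

Because `Fraction` reduces to lowest terms, some entries print in a different but equal form, for example \(\frac{3}{9}\) appears as `1/3`.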

Finally, we end with a cautionary tale about assuming independence.

Example 6.7 (Sally Clark case) An Englishwoman named Sally Clark experienced two back-to-back tragedies: in 1996, her first son died of sudden infant death syndrome (SIDS), and in 1998, her second son died under similar circumstances. She was arrested shortly afterwards and charged with murder.

At the trial, the pediatrician Roy Meadow testified that the probability of one child dying of SIDS was \(1 / 8543\), so the probability of two children dying of SIDS was \[

P(\text{1st child suffers SIDS}) \cdot P(\text{2nd child suffers SIDS}) = \frac{1}{8543} \cdot \frac{1}{8543} \approx \frac{1}{73 \text{ million}}.

\] On the basis of this testimony, Clark was convicted of murdering her two sons.

However, the Royal Statistical Society pointed out that Meadow’s calculation assumed independence, which was not justified for SIDS.

“There may well be unknown genetic or environmental factors that predispose families to SIDS, so that a second case within the family becomes much more likely than would be a case in another, apparently similar, family.” (Green 2002)

In other words, the Royal Statistical Society argued that \[

P(\text{2nd child suffers SIDS} \mid \text{1st child suffers SIDS}) > P(\text{2nd child suffers SIDS}),

\] so it is inappropriate to multiply the individual probabilities as if they were independent.

On the basis of this argument, Clark’s conviction was overturned on appeal in 2003, after she had served over three years of her sentence. Her life was never the same after the false accusation, and she sadly died four years later of alcohol poisoning.

Conditional Independence

Sometimes events are independent, but only if we condition on some other event having happened. Such events are said to be conditionally independent.

Definition 6.3 (Conditional independence) We say that a collection of (possibly infinite) positive-probability events \(A_1, A_2, \dots\) is conditionally independent given \(B\), where \(P(B) > 0\), if they are independent under the conditional probability function \(\widetilde P(A) \overset{\text{def}}{=}P(A \mid B)\)—that is, \[

\widetilde P(A_{i_1} \cap A_{i_2} \cap \dots \cap A_{i_n}) = \widetilde P(A_{i_1}) \widetilde P(A_{i_2}) \dots \widetilde P(A_{i_n})

\tag{6.11}\] for any finite subcollection of \(n\) events.

Note that by Proposition 5.1, this is equivalent to

\[

P(A_{i_1} \cap A_{i_2} \cap \dots \cap A_{i_n} \mid B) = P(A_{i_1} \mid B) P(A_{i_2} \mid B) \dots P(A_{i_n} \mid B).

\]

The game craps provides an example of conditionally independent events.

Example 6.8 (Conditional independence in craps) The events \(\{ \text{win} \}\) and \(\{ \text{round ends on $n$th roll} \}\) are conditionally independent, given the event \(\{ \text{point is $5$} \}\), for any \(n = 1, 2, 3, \dots\).

To see this, we write the events in terms of the rolls, adopting the notation \(E_i\) and \(F_i\) from Example 6.6.

\[

\begin{align}

P(&\text{round ends on $n$th roll}, \text{win}\mid\text{point is 5}) \\

&= \frac{P(\text{point is 5}, \text{round ends on $n$th roll}, \text{win})}{P(\text{point is 5})} \\

&= \frac{P(F_0, E_1^c, E_2^c, \dots, E_{n-1}^c, F_n)}{P(\text{point is 5})} & \text{(rewrite event)} \\

&= \frac{P(F_0, E_1^c, E_2^c, \dots, E_{n-1}^c) P(F_n)}{P(\text{point is 5})} & \text{(independence of rolls)} \\

&= \frac{P(F_0, E_1^c, E_2^c, \dots, E_{n-1}^c) P(E_n) }{P(\text{point is 5})} \cdot \frac{P(F_n)}{P(E_n)} & \text{(multiply and divide by $P(E_n)$)} \\

&= \frac{P(F_0, E_1^c, E_2^c, \dots, E_{n-1}^c, E_n) }{P(\text{point is 5})} \cdot \frac{4/36}{10/36} & \text{(independence of rolls)} \\

&= \frac{P(\text{point is 5}, \text{round ends on $n$th roll})}{P(\text{point is 5})} \cdot \frac{4}{10} & \text{(rewrite event)} \\

&= P(\text{round ends on $n$th roll} \mid \text{point is 5}) P(\text{win} \mid \text{point is 5})

\end{align}

\]

In the last step, we used the result from Example 6.6, where we showed that \(P(\text{win} \mid \text{point is 5}) = \frac{4}{10}\).

The same argument can be used to show that the conditional independence holds for any value of the point.

However, if we do not condition on the point, then winning is no longer independent of how long the round lasts. Although the formal calculation is not easy, we can intuitively see that if a round lasts for many rolls, then the shooter is less likely to win (because a long round suggests that the point was a 4 or a 10, which have a lower probability of being rolled again).
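This intuition can be checked with an exact calculation. The Python sketch below averages over all six possible points, using the same roll probabilities as Example 6.6, to compute the probability that the shooter wins given that the round ends on the \(n\)th roll after the come-out roll. The function and variable names are ours.

```python
from fractions import Fraction

SEVEN_WAYS = 6
# Number of ways to roll each possible point with two fair dice.
POINT_WAYS = {4: 3, 5: 4, 6: 5, 8: 5, 9: 4, 10: 3}

def p_win_given_length(n):
    """P(win | round ends on the nth roll after the come-out roll), n >= 1."""
    win_and_end = Fraction(0)
    end = Fraction(0)
    for point, w in POINT_WAYS.items():
        p_point = Fraction(w, 36)                     # come-out roll sets this point
        p_continue = 1 - Fraction(w + SEVEN_WAYS, 36) # roll is neither point nor seven
        survive = p_continue ** (n - 1)               # first n - 1 rolls continue the round
        win_and_end += p_point * survive * Fraction(w, 36)
        end += p_point * survive * Fraction(w + SEVEN_WAYS, 36)
    return win_and_end / end

for n in (1, 5, 10, 20):
    print(n, float(p_win_given_length(n)))
```

The printed probabilities decrease with \(n\) and approach \(\frac{3}{9}\), the winning probability for a point of 4 or 10, so winning and round length are not independent once we stop conditioning on the point.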

One common misconception is that conditional independence implies (unconditional) independence, or vice-versa. In fact, neither is true. The next example illustrates conditionally independent events that are not independent. In Exercise 6.7, you are invited to devise counterexamples of independent events that are not conditionally independent.

Example 6.9 (COVID-19 antigen testing with one nurse) Suppose we selected a random New York resident around the end of March 2020 and wanted to know if they had COVID-19. We send them to a pop-up testing site where a nurse is administering an antigen test. These tests can randomly give incorrect readings due to manufacturing error and/or improper administration. To account for the possibility of an incorrect reading, the nurse administers two tests.

Considering the following five events,

- \(C_1\): the first test reading is correct,

- \(C_2\): the second test reading is correct,

- \(T_1\): the first test comes back positive,

- \(T_2\): the second test comes back positive,

- \(I\): the person is infected with COVID-19,

answer the following questions:

Q: Is it reasonable to assume that \(C_1\) and \(C_2\) are independent?

A: Yes. It’s certainly possible that slip-ups in manufacturing or administration could be due to random, one-off incidents. If so, then the correctness of one test does not inform us at all about the correctness of the other.

Q: Is it reasonable to assume that \(T_1\) and \(T_2\) are independent?

A: No. If one of the tests comes back positive, that should suggest an increased chance that the person actually has COVID-19. In turn, it should be more likely that the other test will also be positive.

Q: Is it reasonable to assume that \(C_1\) and \(C_2\) are conditionally independent given \(I\)?

A: Yes. If slip-ups in administration or mistakes in manufacturing are due to random, one-off incidents, then the person having COVID-19 will not change the fact that the correctness of one test does not inform us about the correctness of the other.

Q: Is it reasonable to assume that \(T_1\) and \(T_2\) are conditionally independent given \(I\)?

A: Yes. Conditional on the person having COVID-19, a positive test is the same as a correct test. We have already discussed why the tests’ correctness could be conditionally independent given that the person has COVID-19.
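A small numerical illustration may help show how \(T_1\) and \(T_2\) can be conditionally independent given infection status and yet dependent overall. The numbers in this Python sketch (a 10% infection rate, an 80% chance of a positive result for an infected person, and a 5% chance for an uninfected person) are hypothetical and chosen only for illustration. The sketch also assumes conditional independence given both \(I\) and \(I^c\).

```python
from fractions import Fraction

# Hypothetical numbers, chosen only for illustration (not from the text).
p_infected = Fraction(1, 10)         # P(I)
p_pos_given_inf = Fraction(4, 5)     # P(T_k | I), same for both tests
p_pos_given_not = Fraction(1, 20)    # P(T_k | I^c), same for both tests

# Law of total probability for a single test.
p_t1 = p_infected * p_pos_given_inf + (1 - p_infected) * p_pos_given_not
p_t2 = p_t1

# Assumption: T_1 and T_2 are conditionally independent given I and given I^c,
# so the conditional probabilities multiply within each case.
p_both = (p_infected * p_pos_given_inf ** 2
          + (1 - p_infected) * p_pos_given_not ** 2)

p_t2_given_t1 = p_both / p_t1

print("P(T1) =", p_t1)
print("P(T1 and T2) =", p_both)
print("P(T1) P(T2) =", p_t1 * p_t2)
print("P(T2 | T1) =", p_t2_given_t1)
```

Under these assumed numbers, a positive first test raises the probability of a positive second test well above \(P(T_2)\), matching the answer to the second question.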

The next example builds on Example 6.9, showing that conditional independence can behave in counterintuitive ways. For example, even if events are conditionally independent given \(B\), they may not be conditionally independent given \(B^c\).

Example 6.10 (COVID-19 antigen testing with multiple nurses) Recall the COVID-19 testing example from Example 6.9, but now suppose that there are three nurses at our pop-up testing site. Two of the three nurses are experts, and their tests come back with the correct reading much more often than the third nurse, who is inexperienced and often administers the test incorrectly. The selected resident waits in line and is randomly helped by whichever nurse frees up first. Let \(N_1\) and \(N_2\) be the events that the first expert nurse and the second expert nurse administer the tests, respectively, and let \(N_3\) be the event that the inexperienced nurse administers the tests.

Recalling the events from Example 6.9, we ask and answer two questions:

Q: Is it reasonable to assume that \(C_1\) and \(C_2\) are conditionally independent given \(N_1\)?

A: Yes. Since the same expert nurse is administering both tests, this is like our setting in Example 6.9, and it is reasonable to think that correctness of one test has no influence on the correctness of the other.

Q: Is it reasonable to assume that \(C_1\) and \(C_2\) are conditionally independent given \(N_1^c\)?

A: No. Given \(N_1^c\) we know that either the other expert nurse or the inexperienced nurse is administering the tests, but we are not sure which one. If the first test is correct, it is more likely that the other expert nurse is administering the tests, and the second test should therefore also be more likely to be correct. Therefore, conditional on \(N_1^c\), \(C_1\) and \(C_2\) are not independent.
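The reasoning in the last answer can be illustrated with made-up numbers. In the Python sketch below, we assume (hypothetically) that an expert nurse's test is correct with probability \(0.95\), the inexperienced nurse's with probability \(0.6\), that given \(N_1^c\) the remaining two nurses are equally likely, and that the two tests are conditionally independent once the specific nurse is known.

```python
from fractions import Fraction

# Hypothetical accuracies, chosen only for illustration (not from the text).
p_correct_expert = Fraction(19, 20)   # P(C_k | N_2)
p_correct_novice = Fraction(3, 5)     # P(C_k | N_3)

# Assumption: given N_1^c, nurses 2 and 3 are equally likely.
p_n2 = Fraction(1, 2)
p_n3 = Fraction(1, 2)

# P(C_1 | N_1^c) by the law of total probability.
p_c1 = p_n2 * p_correct_expert + p_n3 * p_correct_novice

# Assumption: C_1 and C_2 are conditionally independent given the specific nurse.
p_both = p_n2 * p_correct_expert ** 2 + p_n3 * p_correct_novice ** 2

# Updated probability that the expert is administering, given a correct first test.
p_n2_given_c1 = p_n2 * p_correct_expert / p_c1

print("P(C1 | N1^c) =", p_c1)
print("P(C2 | C1, N1^c) =", p_both / p_c1)
print("P(N2 | C1, N1^c) =", p_n2_given_c1)
```

With these assumed values, a correct first test shifts belief toward the expert nurse, which in turn makes a correct second test more likely than it was before the first test was observed.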

These examples show that conditional independence is a subtle, often fragile property—one we should assume with caution and apply with care.

Exercises

Exercise 6.1 (Fair coin from an unfair one) Suppose you have a coin with a probability \(p\neq .5\) of landing heads. How can you use this unfair coin to simulate a fair coin?

Here is one idea: flip the coin twice. If the two flips are the same, discard the tosses and try again. If the two flips are different, call “heads” if it was HT and “tails” if it was TH.

Show that this method simulates a fair coin.

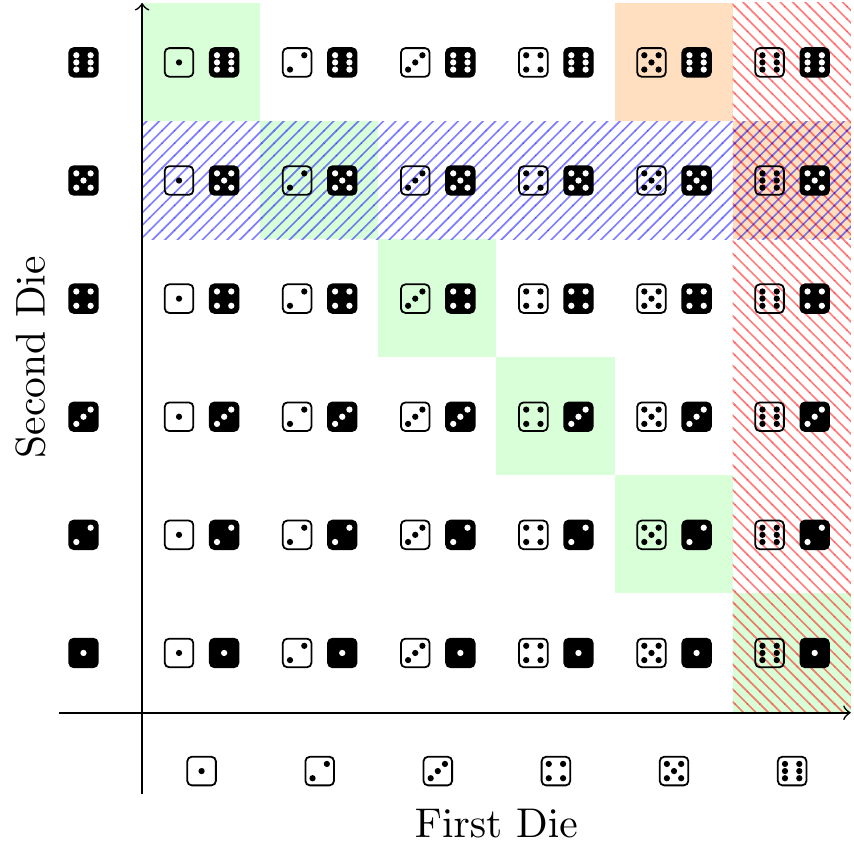

Exercise 6.2 (Rolling two dice and independence) Recall the experiment of rolling two fair dice, which has \(36\) equally likely outcomes in its sample space:

Considering the four events,

- \(A\): the first (white) die lands on \(6\)

- \(B\): the second (black) die lands on \(5\)

- \(C\): the rolled numbers sum to \(11\)

- \(D\): the rolled numbers sum to \(7\)

answer the following questions:

- Are \(A\) and \(B\) independent? Is this what you expected?

- Are \(A\) and \(C\) independent? Is this what you expected?

- Are \(A\) and \(D\) independent? Is this what you expected?

- Are \(C\) and \(D\) independent? Is this what you expected?

Exercise 6.3 Show that, if \(A\) and \(B\) are independent events, then

- \(A\) and \(B^c\) are independent,

- \(A^c\) and \(B\) are independent,

- \(A^c\) and \(B^c\) are independent.

Once you have shown the first statement, you should be able to use it immediately to deduce the second and third.

Exercise 6.4 Recall the experiment of rolling two fair dice from Exercise 6.2. What is the probability that the first die does not land on \(6\) and the sum of the dice is also not \(7\)? Rather than counting, use the results from Exercise 6.2 and Exercise 6.3 to come up with your answer.

Exercise 6.5 Come up with a collection of events where all the pairs of events are independent, but the collection is not independent as a whole.

Exercise 6.6 Suppose that \(A_1, A_2, \dots\) is a collection of independent events. Show that

- \(A_1 \cap A_2\) and \(A_3^c\) are independent

- \(A_1 \cup A_2\), \(A_3 \cap A_4^c\), and \(A_5\) form a collection of independent events.

- \(A_1, A_2^c, A_3, A_4^c, A_5, A_6^c, \dots\) is a collection of independent events.

- \(A_1\) and \(A_1^c \cap A_2\) are not necessarily independent events.

- \(A_1, A_1 \cup A_2, A_1 \cup A_2 \cup A_3, \dots\) is not necessarily a collection of independent events.

Exercise 6.7 Show that there exist events \(A\) and \(B\) that are independent, but not conditionally independent given a third event \(C\).

Exercise 6.8 Suppose that \(A\), \(B\), \(C\) are an independent collection of events and \(C\) has positive probability. Show that \(A\) and \(B\) are conditionally independent given \(C\).

Green, Peter. 2002. “Letter from the President to the Lord Chancellor Regarding the Use of Statistical Evidence in Court Cases, 23 January 2002.” In The Royal Statistical Society.